The purpose of the TinyLlama model is to serve as a versatile platform for enabling end-user applications on mobile devices and for testing a wide range of innovative ideas related to language models.

Introduction

A few months ago Microsoft open-sourced the Orca Small Language Model (SLM). What was really impressive of the Orca SLM was its ability to perform complex reasoning with succinct and accurate answers.

To achieve this, nuanced training data was created in the following way… an LLM is presented with intricate prompts which is designed with the intention to elicit strategic reasoning patterns which should yield more accurate results.

Also, during the training phase, the smaller model is exposed to the task and the subsequent output from the LLM. The output data of the LLM defines how the LLM went about in solving the problem.

But here is the catch, the original prompt is not shown to the SLM.

This approach of Prompt Erasure, is a technique which turns Orca-2 into a Cautious Reasoner because it learns not only how to execute specific reasoning steps, but to strategise at a higher level how to approach a particular task.

Rather than naively imitating powerful LLMs, the LLM is used as a reservoir of behaviours from which a judicious selection is made for the approach for the task at hand.

Consider the reasoning task given to Orca, as seen below…Orca really excels at reasoning tasks.

Solve the following problem by thinking step by step:

John and Mark are in a room with a ball, a basket and a box.

John puts the ball in the box, then leaves for work. While John is away,

Mark puts the ball in the basket, and then leaves for school.

They both come back together later in the day, and they do not know what

happened in the room after each of them left the room.

Where do they think the ball is?

And the output from Orca:

To solve this problem step by step, let's analyze the events that occurred while John and Mark were away:

John put the ball in the box before leaving for work.

While John was away, Mark came into the room and placed the ball in the basket.

Both John and Mark left the room without knowing what happened after they put the ball in its respective location.

They both returned to the room together later in the day.

Since neither John nor Mark saw any changes after they put the ball in the box and the basket, they would naturally assume that the ball is still in the location they left it in. In this case, John would think the ball is still in the box, and Mark would think the ball is still in the basket.

As a result, John thinks the ball is in the box, and Mark thinks the ball is in the basket.

Back To TinyLlama

TinyLlama is a compact 1.1B Small Language Model (SLM) pre-trained on around 1 trillion tokens for approximately 3 epochs.

Despite its relatively small size, TinyLlama demonstrates remarkable performance in a series of downstream tasks. It significantly outperforms existing open-source language models with comparable sizes.

TinyLlama has the capacity to facilitate end-user applications on mobile devices and acts as a nimble platform for exploring a myriad of inventive concepts associated with language models.

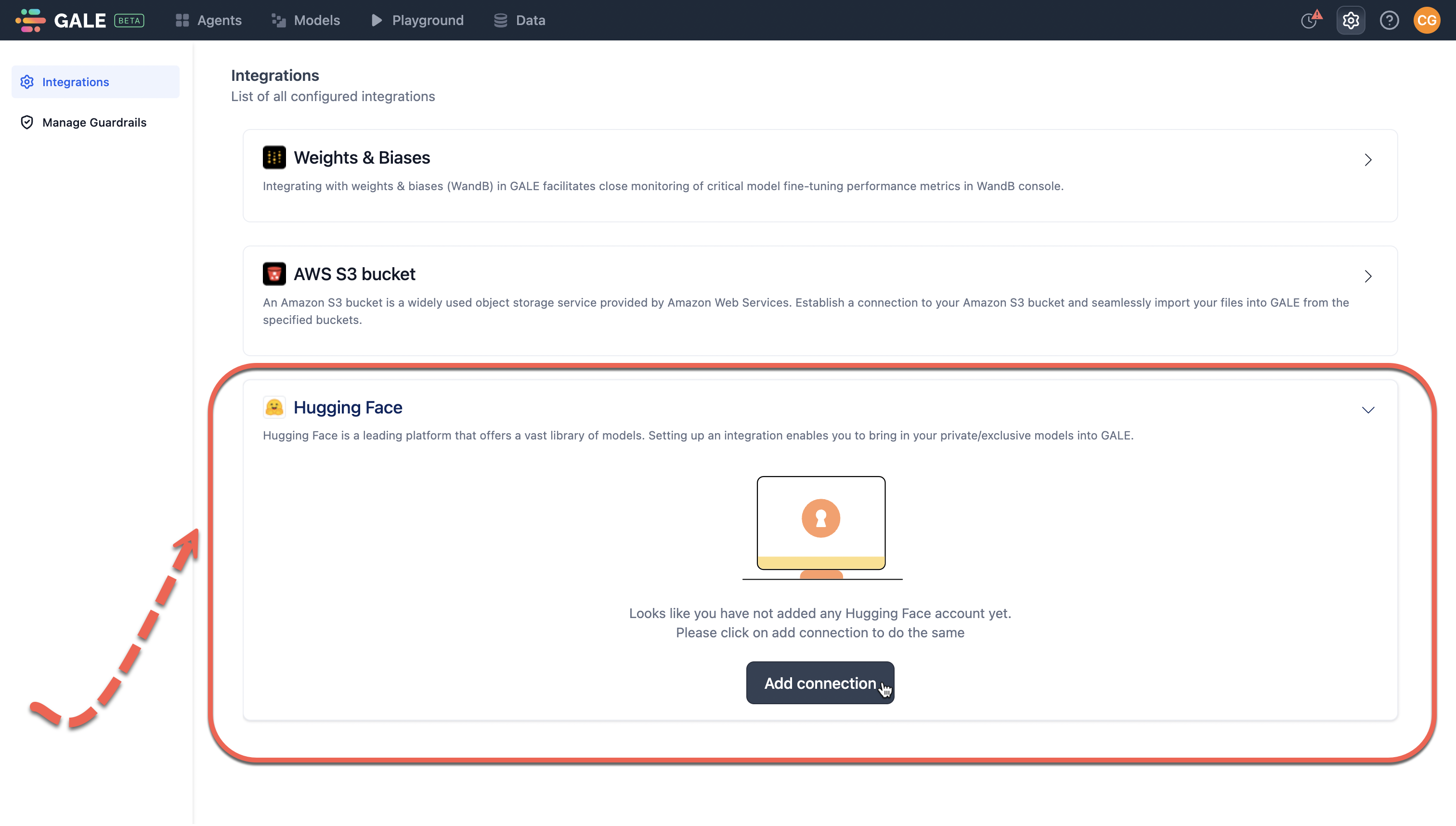

Considering the image below, the TinlyLlama small language model (SML) can be deployed via a framework like GALE in a no-code fashion. The integration GALE has to HuggingFace ensures access to a whole host of models.

Deploying within GALE ensures a user has a safe, secure and private instance of the SLM. This approach allows for scaleability, enterprise grade use and implementations and solving for inference latency.

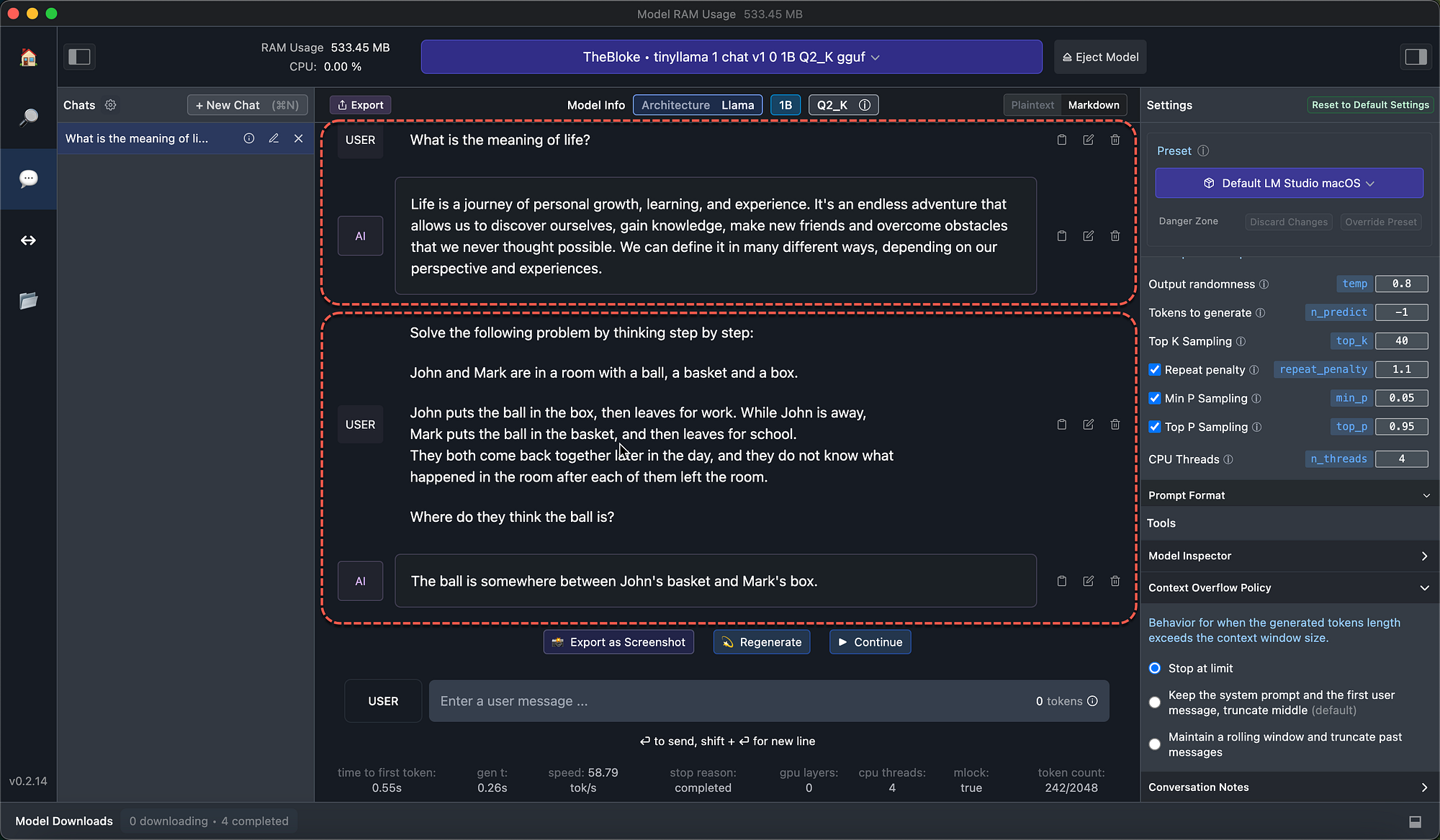

Considering the image below, I loaded chat version of TinlyLlama within LM Studio. you can see how well an abstract question is answered.

Also, when I asked TinyLlama a rather complex reasoning question, the answer was not as good as Orca-2, but quite impressive none-the-less.

The objective was to enhance TinyLlama’s functionality by incorporating a broad spectrum of capabilities. These enhancements will bolster its effectiveness and adaptability across a range of tasks.

In Conclusion

The purpose of the TinyLlama model is to serve as a versatile platform for enabling end-user applications on mobile devices and for testing a wide range of innovative ideas related to language models.

It aims to provide a lightweight solution that can be easily integrated into various applications and scenarios, allowing developers to explore and experiment with different approaches and functionalities in natural language processing tasks.

Additionally, TinyLlama aims to continuously improve its capabilities and performance based on the insights gained during its development and usage, with the goal of enhancing its utility and versatility across different tasks and applications.

Previously published on Medium.

I’m currently the Chief Evangelist @ Kore AI. I explore & write about all things at the intersection of AI & language; ranging from LLMs, Chatbots, Voicebots, Development Frameworks, Data-Centric latent spaces & more.